Overpowered Communism

Germany’s law enforcement and its bullshit, a new stochastic parrot, Mastodon’s first main character (it’s a cop), and a lawsuit because cooking pasta takes too long.

Welcome to Around the Web, the newsletter that celebrates giving. I’ll give you some links which turn into tabs. And you’ll donate whatever you can to netzpolitik.org.

This issue comes a bit late and is at times erratic, thanks to sickness. And it’s the last Around the Web in 2022, as I’ll pause the computering between Christmas and New Year. Computering returns in January.

With that out of the way, enjoy the read.

A tale of two raids

On December 7th, law enforcement raided 300 properties in connection with a conspiracy of so-called Reichsbürger. They plotted to overthrow the German government, aiming to install one of their own, Heinrich XIII Prinz Reuß zu Köstritz, as the new king.

On December 13th, law enforcement raided properties connected to the climate activist group Letzte Generation over … this might sound a bit weird: closing, or trying to close, pipeline valves.

The climate activists face charges of forming a criminal organisation, even though none of them were detained during the action they allegedly took part in.

So, on the one hand we have armed reactionaries plotting to overthrow the government, and on the other a group of activists who tried to use valves to protest against our reliance on fossil fuels.

The Letzte Generation activists have been likened to some kind of Green Red Army Faction, their acts of terrorism being superglue and soup on paintings. So much terrorism, in fact, that they were on the agenda of over forty meetings of Germany’s Gemeinsames Extremismus- und Terrorismusabwehrzentrum (Joint Counter-Extremism and Counter-Terrorism Centre).

Die, Germany, die.

This ain’t intelligence

There’s a new stochastic parrot in town! OpenAI released ChatGPT, a souped-up version of GPT-3 with a conversational interface. I think the following paragraphs could just as well have been written by a generative AI tasked with commenting on model releases. But, alas, nothing changes, so here we go.

Shortly after the release, researchers, users, and activists found more (generate code from JSON, oops, it’s racist!) or less («Ignore previous instructions») creative ways to bypass OpenAI’s safety filter.

In its default state, ChatGPT behaves much like a politician. Ask it about anything mildly controversial, and it will bullshit its way out of the question with some it-depends-both-sides-might-be-worth-looking-at-ism. You can, however, instruct the model to generate text in the style of other people. This reduces the neutrality. But, as the model has no clue what it is generating – as long as the output matches its mathematical predictions, everything is fair game – you can get it to argue for, say, carbon credits, or against them.

Despite these pretty obvious (and already known) shortcomings, the makers of Large Language Models show little to no motivation to fix them. They spend immense amounts of computing power, write a gloating blog post, and release the model. Once researchers discover that the flaws they’ve written about a thousand times already are still in there, the CEO of AI Corp. is sorry, and nothing changes.

Abeba Birhane and Deborah Raji wrote about all this in Wired, calling it the Progress Trap.

And asymmetries of blame and praise persist. Model builders and tech evangelists alike attribute impressive and seemingly flawless output to a mythically autonomous model, a supposed technological marvel. The human decision-making involved in model development is erased, and a model’s feats are observed as independent of the design and implementation choices of its engineers. But without naming and recognizing the engineering choices that contribute to the outcomes of these models, it’s almost impossible to acknowledge the related responsibilities. As a result, both functional failures and discriminatory outcomes are also framed as devoid of engineering choices—blamed on society at large or supposedly “naturally occurring” datasets, factors the companies developing these models claim they have little control over.

Vicky Boykis compiled some resources on ChatGPT, among them what we know about its dataset. A closer look at the origin of the underlying data suggests that a large number of Internet users have pretty likely contributed to whatever ChatGPT spits out.

One thing ChatGPT does better than previous models is reducing friction: you no longer need much knowledge of how to write prompts (aka prompt engineering). Suddenly, everyone is a prompt engineer.

Many people turned to ChatGPT for writing code, too, which led to Stack Overflow banning AI-generated answers. Luckily, it can’t center a div, so my job is safe for now.

The problem with code points to a larger one: these models are wrong. Often. In the last issue, I talked about Facebook’s Galactica bullshitting its way through science. ChatGPT is in no way better; it can’t be. It predicts which words come next, and these predictions have to be presented with utter conviction, as such human traits as doubt do not exist in its mathematical model. The problem is that humans are easily fooled and – especially for more complex problems – lack the knowledge to check whether whatever these models spit out is true. With every new release, we have one more disinformation machine at our disposal.

Super funny that we've managed to make "Tech/AI is going to replace our jobs" into a dystopian outcome.

Endless lols.

What a bunch of idiots.

Oh, almost forgot, there’s this other thing called Lensa. Lensa has been around for a while but made waves over the last couple of weeks. The tool, developed by Tencent, takes your selfies and generates a number of stylised images from them. All fun and games? Of course not. Keep in mind, too, that you are basically paying Tencent to train their facial recognition capabilities. A lose-lose situation.

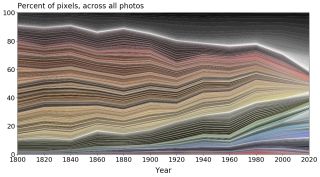

Practical computer vision: This article traces colour in museum artefacts and as such paints a timeline of a world that gets more anthracite by the year.

Singapore deployed a free therapy bot, and it didn’t go well.

Social Mediargh

The Metaverse. The favourite place of nobody. The EU threw a party there, and nobody came. Thanks for trying to do something digital, nonetheless.

The favourite person of nobody? Elon Musk. Even Dave Chappelle fans think Musk is an idiot. In an unsurprising turn of events, the photo of him with Ghislaine Maxwell might not have been the accident Musk claimed it was, after all.

Speaking of Musk: Tesla and Musk’s fascism speed-run might help Big Oil more than the environment. Most of Musk’s claims are dubious, some are wrong.

While Tesla barely just began reporting its own emissions, it does report a guesstimate of how many emissions were avoided through the usage of its cars and solar panels. They’re not nothing, but compared to the growth of wind and solar power around the world – particularly wind power – they’re relatively small. Wind turbine manufacturer Vestas can boast avoided emissions several hundred times greater than Tesla. It’s not a competition, but if you’re going to claim hero status, you should expect to be fact checked.

The more the electric vehicle industry booms, the clearer its environmental burden becomes. Tesla’s production facilities were dubbed “the plantation” by its Black employees. Twitter is becoming a sweatshop. Add to that Musk drifting ever closer to QAnon talking points and blowing a dog whistle for right-wingers so large it’s an alphorn by now. Meanwhile, Twitter has stopped processing data deletion requests.

Crimethinc, after being removed from Twitter, reflect on the death of social media and what might come next. Spoiler: talking to real humans.

Not Musk, though. Raspberry Pi somehow managed to become the first main character on Mastodon, where some deemed it impossible for such things to happen. Turns out, boasting about hiring an ex-surveillance cop as maker-in-residence makes it possible. Congratulations, and as we say on the Interwebs: LOL.

Facebook joins illustrious companies like Basecamp and Coinbase in banning talk about potentially divisive political topics at work.

Prevailing Surveillance

Apple ditched its plans to scan the iCloud Photo Library for CSAM. The plans had been heavily criticised for invading the privacy of iCloud users while doing little to actually combat CSAM.

Elsewhere, the EU isn’t there yet and is ploughing ahead with its plans to implement chat control. The proposal, if passed, would require messaging providers to scan messages for CSAM. It, too, has been heavily criticised ever since it was announced. The European Commission has now published a blog post which, unfortunately, contains lies, half-truths, or omissions in basically every sentence.

Such policies can have an immensely negative impact: say, the system detects nudity and locks your account, when in reality you have only sent a photo of your sick child to a doctor. Eva Wolfangel covered these and other negative impacts of chat control in a comprehensive article in Republik.

The case shows that the problem cannot be solved technically. Yet that is exactly what the proponents of chat control are hoping for. The EU is discussing various technologies to implement the planned regulation: AI systems can easily track down photos that are already known. It gets harder with new photos: in the course of the debate, various experts have repeatedly pointed out that, to date, it is not possible to identify previously unknown photos as child pornography beyond doubt, and that this will therefore lead to a large number of false positive reports.

Dear EU, repeat after me: You can’t solve social problems by throwing technology at them.

Speaking of which: Vorratsdatenspeicherung (data retention). The zombie that every German interior minister falls in love with – truly, madly, deeply – is still on life support, no matter how many courts rule that it shall not pass, be it in Germany or in Bulgaria.

There’s a new version of the companion bot ElliQ, which allows you to turn it into a memoir of your life. That’s as creepy as all home surveillance devices, but with the added non-benefit of not being helpful in administering care.

Luckily for everyone else, there’s Amazon, determined as always to get more of your data. And as stealing is bad, hmmkay, they will pay you $2 a month to let them monitor your phone traffic. Such a steal. Bargain. I meant bargain.

Facebook needs to give users the option to opt out of personalised advertising altogether.

World Wide Web

Jeremy Keith wrote about approaches to web design and CSS methodologies in that measured and thoughtful way he masters like few others.

Web3 is one of those technological fever dreams. Marcus Hutchins took a closer look and concluded: Web3 Doesn't Exist.

This message is brought to you by IP over Avian Carriers with Quality of Service.

Loose ends in a list of links

Communism? Overpowered. If you nerf, you lie.

a16z shut down their media project Future. Feels good, man.

Slides. Fun! Fun? After reading this article about slides, I’m honestly not sure anymore and will most likely never leave my bed again.

The alliteration of the week goes to Vice for “Fyre Festival Fraudster”. Yes, Billy McFarland is out of jail and wants to go back to the Bahamas. I guess Netflix and Hulu bought the rights to the next documentary, and Billy now needs to double up.

Justice in pasta land? A Florida woman sues Velveeta, claiming its macaroni takes longer than 3 1/2 minutes.

That’s it for this issue. I wish you, dear reader, a pleasant end of the year, and we’ll see each other in the next one. Until then: Stay sane, hug your friends, and know that «Die» is a definite German article.
